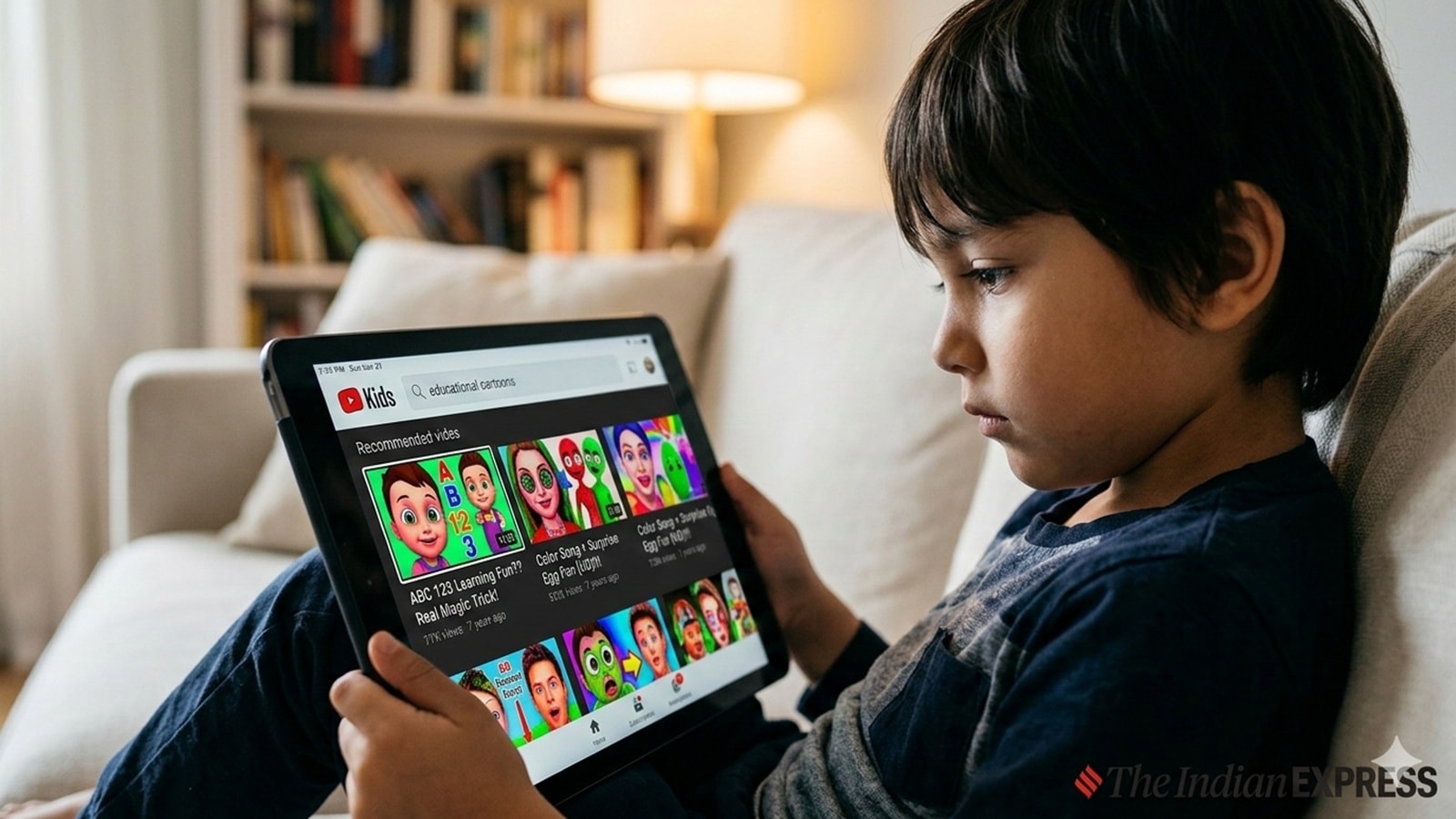

How AI-generated videos are distorting your child’s YouTube feed

In some videos, animals and people have warped faces or extra body parts. Often, the videos contain garbled text. Most clips have incoherent narratives, some riddled with misinformation.

And none are longer than about 30 seconds, allowing little time to develop ideas, plots or any sense of repetition that is often necessary for learning. Now produced with the help of readily available AI tools and online tutorials, many of these videos have millions of views and counting, with channels churning out videos at a rapid rate, sometimes even multiple times a day. Many of the YouTube accounts producing AI-generated videos reviewed by the Times specifically target the youngest of viewers and their parents, marketing their channels as “educational” as opposed to entertainment.

Creators are profiting off this content with little oversight from YouTube.

“To me, the meaninglessness of these videos is a huge problem because they’re just attention capture,” said Dr. Jenny Radesky, a developmental behavioral pediatrician and associate professor of pediatrics at the University of Michigan Medical School. “And then the worst case is that it’s so fantastical and full of attention capture that it is going to be cognitively overloading to the child.”

Radesky and others raised concerns about hyperrealistic AI content, especially for children who are too young to be able to distinguish fantasy from reality. McCall Booth, a developmental psychologist and researcher at Georgetown University, said children “may have a harder time in the future identifying fake content because their mental schema had already adapted to include improbable but aesthetically realistic character actions.”

Even on YouTube Kids, which is intended to provide a more controlled digital environment for children, these kinds of AI videos are easy to find. Last summer, videos of AI-generated animals diving into pools were even a TikTok trend. Rachel Barr, a developmental psychologist and director of the Georgetown University Early Learning Project, pointed out that the pool-diving videos in particular contain a lot of conflicting information for young children who may have a hard time deciphering what is real.

“The animal could be real. The pool could be real, but again, it’s a mismatch between what should happen in the real world between those two things. So that is going to place a lot of this cognitive load on the child to try and map those things together,” Barr said.

“It may seem like it’s innocuous,” she added. “But that is not going to help them learn either about swimming or giraffes or ‘G.’”

Radesky explained that well-crafted media serves as a mirror and helps reflect the world that children already know back to them. Shows like “Mister Rogers’ Neighborhood” or “Sesame Street,” for example, intentionally try to help make sense of the world, not only through letters and numbers, but also through emotions and learning about interpersonal relationships. The American Academy of Pediatrics issued a guide for parents on how to select media content for their young children, telling parents to avoid content that is either AI-generated or highly sensationalized.

The guidance also cautioned against consuming short-form videos. While there aren’t many studies yet on how short-form media affects young children, Barr said that for children under the age of 5 whose attention systems are still developing, the videos move too rapidly, and usually aren’t long enough to include any meaningful context or story plot. The Times focused primarily on YouTube Shorts when conducting its analysis of AI videos, as most AI tools default to short-form video and offer vertical formatting options.

Over the course of several weeks, the Times watched videos from popular children’s channels on YouTube like CoComelon, “Bluey” or Ms. Rachel from a private browser at different times throughout the day. Then we scrolled through the platform’s recommended YouTube Short videos in 15-minute intervals in order to better understand how the algorithm floods the feed with this content.

In one 15-minute session, after watching CoComelon’s “Wheels on the Bus” video, more than 40% of the videos watched appeared to contain AI-generated visuals. The Times manually reviewed each of the videos, some of which clearly featured YouTube’s label for “altered or synthetic content,” while others displayed visual errors or other distortions in the background. The AI-generated content wasn’t always obviously flawed, and some videos were sufficiently seamless to evade casual detection by the human eye.

To further vet the videos, the Times used an AI detector to determine with high probability that the videos, and in some cases the music and voices, were AI-generated. The Times also found that the same AI videos or channels tended to pop up repeatedly in multiple sessions. Mitch Prinstein, a professor of psychology and neuroscience at the University of North Carolina at Chapel Hill, further questioned the addictive nature of these videos.

“These do strike me as something that are made to really get in your head,” Prinstein said. “It may even be harmful, but we need more data.”

Prinstein explained that due to the dramatic proliferation of AI content in the last year alone, it’s hard to keep up with the research findings. While the jury is still out when it comes to definitive long-term health effects, and low-quality videos aimed at children existed on the platform long before the rise of AI, experts fear that the sheer volume of these videos now may cause displacement, in which children lose out on opportunities to engage with media content or other activities, like reading and interacting with others, that could bring them more benefits.

The vast quantity of AI content is already upending the feeds of all kinds of social media users. Elsewhere on YouTube, older children can easily find disturbing videos depicting abusive and violent scenes featuring popular children’s characters. Facebook pages are uploading altered images that misrepresent historical events.

AI avatars in the form of “doctors” on Instagram are pushing bogus wellness advice and products. In November, TikTok said it had labeled over 1.3 billion videos as AI-generated. Some platforms have begun to tighten their rules around the use of these tools.

Pinterest has features that allow users to select how much of this kind of content they want to see. TikTok also said it was testing ways that would enable people to reduce the amount of AI content in their feeds. Last month, YouTube announced new controls that allow parents to set time limits on YouTube Shorts.

The Times requested comment from YouTube on its policy around AI videos for children, and shared five channels as examples.

In response, YouTube suspended all five accounts from the YouTube Partner Program, meaning they are ineligible to earn ad revenue on YouTube and are blocked from appearing on YouTube Kids. The Times also sent three examples of hyperrealistic AI videos on YouTube Kids, which YouTube then removed from the app. YouTube also stated that it removed one video the Times shared for violating child safety policies.

The AI video showed animals being chased and turning different colors once inoculated with a syringe.

However, similar videos can still be found on the channel.
